We all live with the fear of “what if my server crashes”: how quickly and easily can I get my server up and running again from backup data? I have already discussed the right tool and strategy to use in my earlier article. Part of that strategy is setting up a correct retention policy. In this article, I will share how you can set that up on GCP Cloud Storage and let the platform auto-delete older backups/files, so that we are not paying unnecessarily for archived data.

Steps to set up…

Step 1: Go to GCP Cloud Storage and select the bucket to which you wish to apply the policy, then click on the ‘Lifecycle’ tab. This is where we will add a rule to auto-delete objects based on their age.

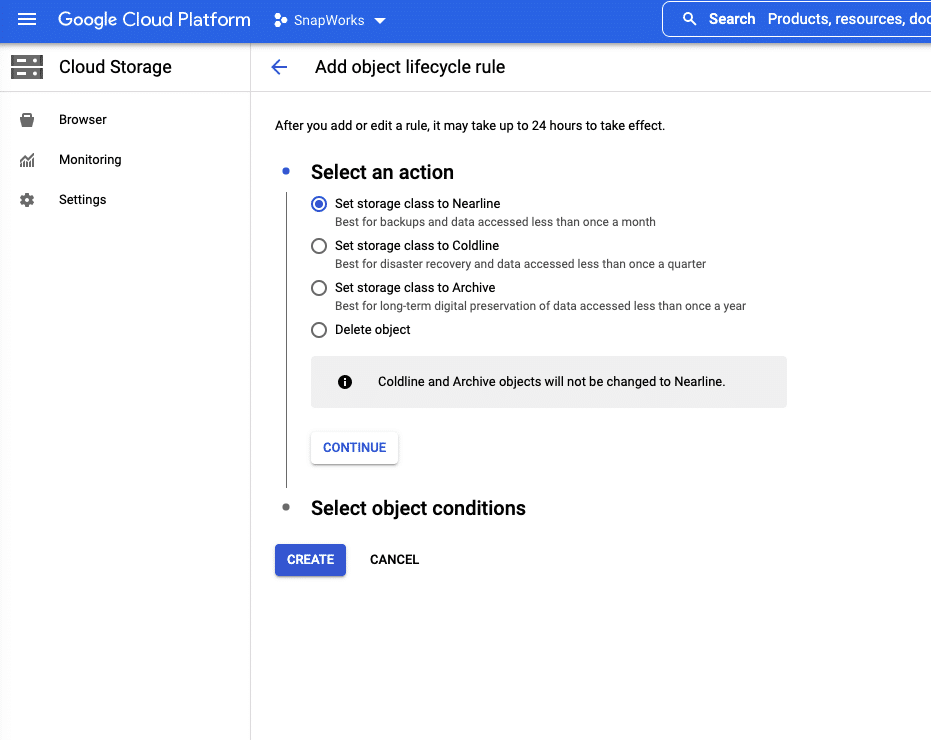

Step 2: Click on ‘Add a Rule’. Here you can select the action, that is, what you want the platform to do with your objects: delete them, archive them, or move them to another storage class. Choose whichever fits your requirement.

Archive: This is for long-term preservation. If you feel you may need some older backups at some point, you can choose this option.
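For readers who prefer configuration files over the console, the Archive option can be sketched as a lifecycle configuration. This is a minimal sketch, not something from the steps in this article: the function name `build_archive_rule` and the 30-day threshold are hypothetical, and the JSON shape follows the lifecycle configuration format as I understand it from Google's documentation, so please verify it before applying.

```python
import json


def build_archive_rule(age_days: int) -> dict:
    """Build a lifecycle configuration that moves objects to the
    ARCHIVE storage class once they are age_days old.

    build_archive_rule is a hypothetical helper for illustration.
    """
    if age_days < 0:
        raise ValueError("age_days must be zero or greater")
    return {
        "lifecycle": {
            "rule": [
                {
                    # Change the storage class instead of deleting the object.
                    "action": {"type": "SetStorageClass", "storageClass": "ARCHIVE"},
                    # Age is counted in days.
                    "condition": {"age": age_days},
                }
            ]
        }
    }


# 30 days is an assumed example value, not a recommendation.
archive_config = build_archive_rule(30)
print(json.dumps(archive_config, indent=2))
```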

For the purpose of this tutorial, we will walk through the ‘Delete’ action. In my personal opinion, this is suitable for most use cases.

Step 3: Choose ‘Delete Object’ as the action from Step 2 and click Continue. As we are defining the rule based on the age of the object, choose the first option, ‘Age’, and enter the number of days for which you want to keep the backup.

In my case I chose 15 days, which I felt was right for my use case.
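The same delete-after-15-days rule can also be written as a lifecycle configuration file instead of being clicked together in the console. The sketch below only builds the JSON. The helper name `build_delete_rule` is hypothetical, and the JSON layout is my understanding of the documented lifecycle format, so treat it as an assumption to check against the official docs.

```python
import json


def build_delete_rule(age_days: int) -> dict:
    """Build a lifecycle configuration that deletes objects
    once they are age_days old.

    build_delete_rule is a hypothetical helper for illustration.
    """
    if age_days < 0:
        # Age is a count of days, so a negative value makes no sense.
        raise ValueError("age_days must be zero or greater")
    return {
        "lifecycle": {
            "rule": [
                {
                    "action": {"type": "Delete"},
                    "condition": {"age": age_days},
                }
            ]
        }
    }


# 15 days matches the retention period chosen in this article.
config = build_delete_rule(15)
print(json.dumps(config, indent=2))
```

If you save the printed JSON to a file (say `lifecycle.json`, a name I am assuming here), it should be possible to apply it with `gcloud storage buckets update gs://YOUR_BUCKET --lifecycle-file=lifecycle.json`, where `YOUR_BUCKET` is a placeholder for your own bucket name.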

Step 4: Click ‘Create’.
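Before relying on the rule, it can help to preview which backups an age-based rule would cover. The sketch below is a rough local approximation, assuming you already have each object's creation time. The function `expired_backups` and the sample file names are hypothetical, and the platform's own evaluation may differ in timing details.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List


def expired_backups(created_at: Dict[str, datetime], age_days: int,
                    now: datetime) -> List[str]:
    """Return the names of objects that are at least age_days old at `now`.

    This only approximates an age condition locally; it does not
    talk to Cloud Storage.
    """
    cutoff = now - timedelta(days=age_days)
    return sorted(name for name, created in created_at.items()
                  if created <= cutoff)


# Hypothetical sample data for illustration.
now = datetime(2024, 1, 31, tzinfo=timezone.utc)
sample = {
    "backup-2024-01-10.tar.gz": datetime(2024, 1, 10, tzinfo=timezone.utc),
    "backup-2024-01-20.tar.gz": datetime(2024, 1, 20, tzinfo=timezone.utc),
    "backup-2024-01-30.tar.gz": datetime(2024, 1, 30, tzinfo=timezone.utc),
}

to_delete = expired_backups(sample, 15, now)
print(to_delete)  # ['backup-2024-01-10.tar.gz']
```

With a 15-day retention, only the backup from 10 January is old enough on 31 January; the two newer backups are kept.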

Great! We have set up a lifecycle rule for the objects in our GCP storage bucket. This will ensure we are not storing heaps of data/backups just for the sake of it, and not paying hard-earned $$ for them.

Entrepreneur and Technology Enthusiast | Started Varshyl Technologies, a web and mobile application development company, helping companies build and promote their digital presence. Co-founded Snapworks – a mobile first communication platform for schools. Outside VT, enjoys his morning workouts, reading biographies and golf.